Half of my book, The Uniqueness of Western Civilization, is about discrediting the multicultural claim that, as late as the mid-1700s, the West was no more advanced than the major civilizations of Asia, or China in particular, and that only a set of fortuitous circumstances gave the West a chance to industrialize first. The West did not “stumble” accidentally into the New World, I argued, and it was not “easy access” to the resources of the Americas, enslavement of blacks, or availability of cheap coal in Britain that made Britain’s takeoff possible.

Columbus’ voyages were one among many other European explorations, starting with the organized expeditions of the Portuguese around Africa and into the Indian Ocean in the 1400s. During the 1500s and 1600s, thousands of Europeans set about discovering and mapping the whole world for the first time in human history. While the acquisition of resources from the Americas and the colonial trade did affect the timing, magnitude, and rate of industrial growth, the Industrial Revolution occurred first in Britain because of this nation’s freer markets, property rights, superior applications of modern science to industry, representative institutions, and a dynamic middle class imbued with a Protestant ethic. Many other European nations – Belgium, Switzerland, Germany, and the Nordic countries – would soon industrialize in the 1800s, with next to no colonies. Overall, the home market and the intra-European trade were far more significant than the colonial trade.

What I did that was new in Uniqueness was to argue that the rise of the West can’t be reduced to the Industrial Revolution, or even the preceding Galilean-Newtonian revolution. The West has always stood apart from the Rest as a singularly different civilization, since prehistoric times. The history of the West is filled with continuous “births,” “origins,” “creations,” “transitions,” “renaissances,” and “revolutions.” We can start with ancient Greece and the “world’s first scientific thought,” the “invention of deductive reasoning,” the “birth of citizenship politics,” the “emergence of historical consciousness,” and “the discovery of the mind.” But then we have to explain what made Greece so different. One predominant explanation connects the development of rational discourse to the rise of the polis or city-state beginning in the eighth century BC. Within the polis, Greek citizens were induced to participate in politics, to engage in criticism of opposing views and contest for their views and interests in a reasoned manner, rather than submit to a priestly or government hierarchy.

But why the emergence of the polis and the higher individualism of the Greeks in the first place? Some have pointed to the geographical distinctiveness of Greece, its mountainous ecology, which compartmentalized the land into separate valleys and encouraged the rise of small, independent city-states. The geographic uniqueness of Europe generally is always part of the explanation. There is no question that the greater environmental diversity of Europe – its multiple rivers and links to a wider variety of seas, coupled with the fact that its mountains, plains, and valleys are all “of limited extent”; that no great river or plain dominates the ecology; and that farmers can rely on rainfall rather than on centrally-controlled irrigation systems based on one, large river – encouraged less centralized political authorities.

But rather than viewing geography as the active historical agent, the way Jared Diamond and others do, I drew on Hegel to emphasize the deep effect this environment had on the “type and character” of European peoples. The peoples of the world belong to the same species, but their state of being – their mental vision, temperament, and character – is deeply influenced by their place of habitation on the Earth. I also went back in time to the prehistorical Indo-Europeans to argue that before the polis in Greece was established around the eighth century BC, there were already aristocratic characters unwilling to submit to despotic rule. The Mycenaean civilization (1900-1200 BC) was uniquely aristocratic in the sense that “some men,” not just the King, were free to deliberate over major issues affecting the group, as well as free to strive for personal recognition. The material origins of this aristocratic individualist ethos are to be found in the unique pastoral lifestyle of the Indo-Europeans, who evolved out of the geographical area known as the “Pontic steppes.” They were the riders of horses, the inventors of chariots, and co-inventors of wheeled wagons, as well as the most efficient users of the “secondary products” of domestic animals (dairy products, textiles, harnessing), which gave them a more robust physical anthropology and the most dynamic way of life in their time.

I used the philosophical insights of four German thinkers (Spengler, Weber, Hegel, and Nietzsche) – specifically, their writings about the “infinite drive” and “the irresistible trust” of the Occident, the “energetic, imperativistic, and dynamic soul of the West,” the “rational restlessness” of Europeans, the “powerful physicality [of aristocrats] . . . effervescent good health . . . [love of] adventure, hunting, dancing, jousting and everything that contains strong, free, happy action” – to argue that only European man has exhibited an intense desire to subject the world to its own ends, and that it is mainly this self who has been unable to feel “at home” in the world until it got rid “of the semblance of being burdened with something alien” (Hegel’s words).

Why has the European mind shown less reluctance to accept “the ineffable mystery of the world”? Why have Europeans been less willing to accept a social order based on laws and norms which have not been subjected to free reflection? Drawing on Kojève, I argued that the ultimate origins of Western uniqueness are to be found in the reality that only Western man became “truly” self-conscious, because only this man created – in the environment of the Pontic steppes – a society in which the struggle to become a man involved a contest “for something that does not exist really,” that is, a contest solely for the sake of being recognized by another human being as a man exhibiting aristocratic excellence against the biological fear of death and against the fear of rebelling against the norms mandated by mysterious/despotic gods and rulers.

In all cultures, men have struggled for manhood and recognition by other men, but only among the aristocratic culture of Indo-Europeans do we find an incessant contest to validate one’s aristocratic status among one’s peers, for these nomadic, horse-riding warriors were not subservient to any ruler, but were possessed by an attitude of “being-for-self,” or self-assertiveness (rather than an attitude of “being-for-another” or deference towards a fearful god or despotic ruler). This contest had a profound effect on the constitution of the human personality, leading to the discovery of a unified self. This discovery was not, in the first instance, an intellectual affair, as bookish academics prefer to think; it was an intensively passionate drive for masculine identity in the pursuit of the highest form of recognition, aristocratic status, and for the sake of the highest ideals: honor, courage, and immortal glory.

Julian Jaynes and the Origins of Consciousness

This sketchy statement can’t be persuasive. Only a reading of Uniqueness, Faustian Man in a Multicultural Age, and my subsequent articles can stand up as a real argument. Recently, upon being asked to write an Introduction to an old translation of the Iliad, I dug deeper into my claim that only Europeans attained a consciousness of self as a distinctive agent with a faculty called “mind.” Drawing on Julian Jaynes, among others, I argued that prior to the Greeks, humans were not fully conscious; the minds of Mesopotamians and Egyptians were ruled by the voices of authorities – of gods, fear of repression, and rigid theocratic norms and inhibitions. Only with the Indo-Europeans, and then at a very rapid pace with the ancient Greeks, did humans begin to overcome the dark world of mysterious forces and unquestioned norms enveloping their subjective consciousness. Consciousness is not a naturally given attribute acquired by humans through natural selection, as Darwinians assume. One can interact “knowingly” with nature in trying to survive. But consciousness involves introspection, awareness of one’s self as a generator of thoughts, and the ability to differentiate one’s mind from the enveloping world outside. Computers are super-logical, but they are not conscious. Only Europeans became conscious, and the causes of this consciousness need an explanation. The Iliad is the first work in Western literature, and it is the first work exhibiting signs of consciousness, introspection, and deliberation.

What is consciousness? This is one of the most difficult questions. Years of insistent research and thousands of publications by philosophers of the mind, neurologists, and cognitive psychologists have yet to produce a strong consensus. I disagree with those who identify consciousness with perception. Dictionaries generally define “perception” and “consciousness” using similar words. Perception is “the ability to see, hear, or become aware of something through the senses.” Dictionaries emphasize not just the sensing of things, but the “process of becoming aware of something through the senses.” The synonyms are said to be “recognition,” “awareness,” “consciousness,” and even “knowledge,” “understanding,” and “comprehension.” Similarly, dictionaries define consciousness as “the state of being awake and aware of one’s surroundings,” or “the awareness or perception of something by a person.”

But in the definition of consciousness, one will find a few different words, such as “the fact of awareness by the mind of itself and the world.” This distinction is important, since humans only began to talk about the mind as a separate faculty in ancient Greek times. Philosophers then started to identify a faculty they called nous which they contrasted with the senses, feelings, and gods, and which they said was the part of the human constitution, an immaterial part, responsible for thinking. Aristotle clearly distinguished this faculty from sense perception, imagination, and memory. He equated it with the intellect or intelligence, and argued that it was the source of the human ability to produce reasoned arguments. Distinguishing the mind in this way, and understanding that employing this faculty is necessary to produce true ideas, as contrasted to mere opinions, was itself an indication that men during Greek times had become self-consciously aware of themselves as subjects capable of reasoning on their own, and thus capable of producing truthful statements to be contrasted to ex cathedra pronouncements by priestly classes. Truthful statements could only come from men who know how to employ their minds in a self-conscious way.

I am approaching consciousness not as a naturally given attribute of the human species, but as a faculty produced in time by a particular people. Julian Jaynes’s book, The Origin of Consciousness in the Breakdown of the Bicameral Mind (1976), is the best book on this topic, because it was the first book written by a psychological expert who framed the “origins of consciousness” as a historical rather than as an evolutionary and a purely psychological phenomenon.

According to Jaynes, humans became conscious sometime after 1000 BC. Before this time, humans were constituted by a “bicameral mind” wherein habit, traditions, or the voices of gods and rulers dominated their thoughts. Jaynes explained that humans who are not conscious can think and perform complex tasks. Consciousness is not necessary for thinking. We play the piano and drive cars without being conscious of the performance of these activities. Jaynes’ definition of consciousness amounts to “consciousness of consciousness.” We are conscious when we are aware of our awareness. A bicameral mind is a mind that does not consciously think about its own thinking, and is thus lacking in subjective deliberation. It is a mind that does not recognize its own agency as the progenitor of thoughts. It is a mind that operates habitually under the command of external powers or the external voices of gods who must be obeyed.

Jaynes’s book was very well received, and today there is a society in his name. But among establishment cognitive psychologists, he is barely mentioned – both because he was an unassuming English gentleman without a tribal media network pushing his ideas, and because new findings in neurology discredited the neurological part of his “bicameral mind” concept. Some concluded he confounded the origins of consciousness with the emergence of the concept of consciousness. They argued that humans are naturally conscious, and have been conscious through history; what happened at some point in time is that philosophers began to discuss the concept of consciousness and to write about it. Psychologists were also impatient with Jaynes’ claim that consciousness was constructed at some point in history. His ideas could not withstand the growing influence of evolutionary psychology and its argument that consciousness is altogether a product of natural selection.

Jaynes’s own historical explanation did not make it any easier. For him, consciousness began sometime around 1400-600 BC, when men were compelled by the chaos of wars, catastrophes, and national migrations induced by overpopulation, as well as by the widespread use of writing, to question their bicameral mentality and to voice their own internally-generated thoughts. He emphasized how the development of writing in the second millennium, combined with the weakening and collapse of theocratic empires, as well as the intermingling of peoples from different nations with different beliefs, weakened the “auditory power” of the gods and the rigid norms occupying the brains of peoples during this epoch. Jaynes did not mean the type of writing that was first invented in Mesopotamia around 3500-3000 BC as an inventory device – as a way of recording the collection of taxes and god-commanded events. He meant the writing found in the “narratization of epics,” in which an “I” of ourselves “doing this or that,” and thus making decisions on the basis of internally-generated subjective outcomes, made an appearance. He further explained that human introspection and self-visualization first emerged through the making of metaphors and analogies, metaphors of “me” and of “analog I.” Humans came to experience consciousness of themselves as generators of their own thoughts only when they had developed a language sophisticated enough to produce metaphors and analogical models – that is, figures of speech containing an implied comparison, in which a phrase or word commonly used in one domain of knowledge, or in reference to one thing, is applied to express an idea in another domain, or in reference to another thing, in which some similarity exists.

His explanation of metaphors was very insightful. I will return to this subject later. What’s perplexing about Jaynes’ historical account is that, while he correctly said that Greek literature after the Iliad became a literature of consciousness, starting with the Odyssey itself, he used the Iliad as a prototypical example of a people with a bicameral mind, whereas I believe the Iliad was a transitional work exhibiting the first clear signs of free deliberation and introspection, a work packed with some of the best metaphors in Western literature. If one reads carefully between the lines, one senses that Jaynes’ examples of consciousness outside the Greek world, or prior to the Greek world, are not very strong, whereas his examples of consciousness after the Iliad are solid. Even in the case of the Iliad, actually, Jaynes acknowledged the presence of some passages in which the protagonists do make decisions as subjects with their own volition.

I have defended this argument in a forthcoming Introduction to the Iliad, and will not elaborate further. Bruno Snell’s book, The Discovery of the Mind: The Greek Origins of European Thought (1953), has been mentioned before at the Council of European Canadians as a work that explores the growing appreciation of the inner self in Greek literature and philosophy after 650 BC, but he too has difficulties denying volition in the Iliad. When all is said, it is hard to deny that a feeling of self, of the difference between the inner and outer, a capacity of the subject to achieve self-control over his emotional impulses, is an account that can only be made in reference to ancient Greek culture, and not in reference to any other culture outside European lands, even to this day. It is true that most classical scholars today are preoccupied with highly specialized subjects inside an academic world that sees ancient Greece as no more than a “Mediterranean culture” among numerous “intertwined” cultures from Africa and the Near East. But there is a recent work of close to 600 pages that travels the same intellectual path as Snell, detailing how the Greeks, from the Odyssey to Socrates, became conscious beings: Keld Zeruneith’s The Wooden Horse: The Liberation of the Western Mind, From Odysseus to Socrates (2007). I will write about this book another time.

Steven Mithen and the Origins of Cognitive Fluidity

Now I want to go back in time by examining a very original work by archaeologist Steven Mithen, The Prehistory of the Mind: The Cognitive Origins of Art and Science (1996), which synthesizes a vast literature to argue that humans became conscious, or, as he also puts it, achieved “cognitive fluidity” sometime between 60,000 and 30,000 years ago. Homo sapiens sapiens, the first modern humans, appeared 100,000 years ago, but only 40,000 years later did human consciousness arise. Mithen deduces that consciousness emerged in this period, the Upper Paleolithic era, because of the incredible cultural and technological efflorescence witnessed during this relatively short period after millions of years of stagnation.

Between 2 and 1.5 million years ago, Homo habilis, H. rudolfensis, and H. ergaster managed to create stone hand choppers with a brain size between 500 and 800 cc. Between 1.8 million and 400,000 years ago, H. erectus, with a brain ranging between 750 and 1250 cc, managed to create stone handaxes. This handaxe would remain the core technology until the Upper Paleolithic revolution. Between 400,000 and 100,000 years ago, archaic H. sapiens and H. heidelbergensis, with a larger brain size of about 1100-1400 cc, continued to create the same stone handaxes. By 150,000 years ago, H. neanderthalensis appears in Europe, and he, too, uses handaxes, though with a new “Levallois” technique for removing flakes. He has a brain size of 1200-1750 cc. Then, H. sapiens sapiens appears around 100,000 years ago, with basically the same brain size, and he, too, uses them, though there are “hints of something new,” the “very first tools of materials other than stone and wood.” But, all in all, H. sapiens sapiens “continues making the same range of tools as his forebears” (22) until about 60,000 years ago.

From this time on, starting about 60,000 years ago, “with no apparent change in brain size, shape or anatomy in general,” there is a “cultural explosion.” Blade production starts on a “systematic scale,” with blades often chipped as projectile points and chisel-like engraving tools; bone is carved to make points and awls, harpoons and needles. Tools are designed for specific purposes and multicomponent tools are made. The first art objects also appear after 40,000 years ago: beads, necklaces, and pendants are made from ivory, figurines are carved, and abstract and naturalistic images are painted and engraved on cave walls.

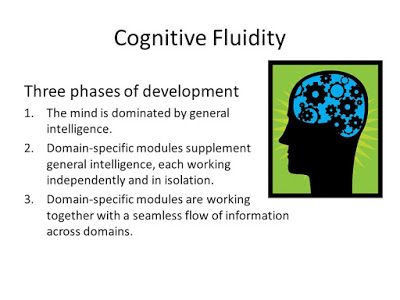

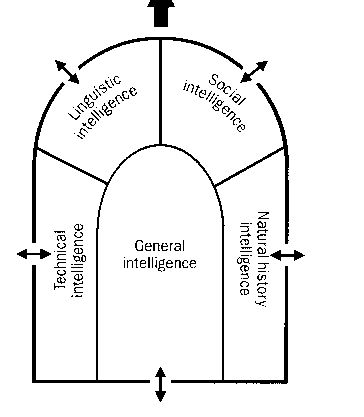

Why this sudden explosion if brain size remained the same and human beings, H. sapiens sapiens, were already around? Mithen draws on the research findings of cognitive psychologists to argue that the Upper Paleolithic “revolution” was the result of a change in the architecture of the mind, characterized by a new ability among humans to make connections between previously separate cognitive modules. You will recall from my article, “The Multiple Intelligences of Whites,” Gardner’s seven intelligences: linguistic, logical-mathematical, musical, spatial, bodily-kinesthetic, and the two forms of personal intelligences. Mithen relies on Gardner’s arguments, together with Jerry Fodor’s The Modularity of Mind (1983), Leda Cosmides and John Tooby’s The Adapted Mind (1992), and other publications, to construct his own argument that, in the course of evolution, starting with the ancestral ape some 6 million-4.5 million years ago, through the first australopithecines dating to 4.5-1.8 million years ago, to the first members of the Homo lineage 2 million years ago, all the way to H. sapiens sapiens, a variety of intelligences evolved in complexity. Mithen first sees a “general intelligence, which includes modules for trial and error learning, and associative learning” (88) among ancestral apes (and contemporary chimpanzees). This is an all-purpose intelligence used for survival involving foraging decisions, tool use, and basic perception and awareness of the environment through the senses. What happens in the course of evolution is that new, specialized modules of intelligence begin to emerge over and above the all-purpose intelligence: social, linguistic, natural history, and technical intelligences.

Mithen agrees with evolutionary psychologists Cosmides and Tooby that “we can only understand the nature of the modern mind by viewing it as a product of biological evolution” (42). He likes their metaphor of the human mind as being a Swiss army knife “with a great many, highly specialized blades,” each of which was “designed by natural selection to cope with one specific adaptive problem.” He agrees that these blades or modules are “hard-wired into the mind at birth and universal among all people” (43), although he is sympathetic to Gardner’s view that the cultural context in which children grow influences the intelligences. But he thinks the metaphor of a Swiss army knife can only take us so far in understanding the H. sapiens sapiens mind that emerges during the Upper Paleolithic era. He likes Fodor’s suggestion that cognition is inherently “holistic,” or based “on the integration of information from all input systems.” Mithen brings up a few lines from Fodor stating that cognition is a unified process, not encapsulated; “its creativity, its holism and its passion for the analogical” (Fodor’s words) is what characterizes cognition. Mithen prefers how Gardner stresses the interaction between the intelligences from the start. He brings up some sentences from Gardner stating that “in normal human intercourse one typically encounters complexes of intelligences functioning together smoothly, even seamlessly, in order to execute intricate activities.” Mithen takes up this idea about building up connections across the different intelligences, “as exemplified in the use of metaphors and analogies,” to explain why humans become so creative during the Upper Paleolithic era. Basically, a mind with a new architecture evolved, capable of making analogical connections between the linguistic, social, natural history, and technical intelligences.

Starting with an all-purpose general intelligence, the first specialized intelligence Mithen detects among our ancestral apes is an emerging module for social intelligence. This intelligence (akin to Gardner’s personal intelligences) is defined as the ability to predict the behavior of others in order to augment our reproductive success. Among primates, this module is already highly evolved, as exemplified in the way primates actually use “deception and the construction of alliances and friendships” in the pursuit of reproductive success, exhibiting “extensive social knowledge about other individuals, in terms of knowing who allies and friends are, and the ability to infer the mental states of those individuals” (82-3). It is very revealing, though, that Mithen says this in passing without making the most of it – namely, that the one module that first emerges in a highly intelligent way among primates, social intelligence, is the one pointing towards the unification of all the other intelligences, and indeed the one that involves, as an intrinsic component of its nature, conscious awareness, because this intelligence is about reflecting how we feel and behave in a particular situation in order to reflect about how another individual will feel and behave in a similar situation. Mithen thinks that our ancestral apes and present-day chimpanzees, but not monkeys, already had a “conscious awareness of their own minds,” and a concept of self.

But later he correctly qualifies this assessment by adding that chimps had a low level of self-awareness. Full self-awareness comes with H. sapiens sapiens, when all the other modules are fully evolved and the mind acquires an ability to make analogical connections between all the intelligences. There are signs of an incipient natural history intelligence among chimps. This intelligence is all about understanding the world in the struggle for life: perceiving the migratory movements and behavioral traits of other animals, the range of environments, and predicting resource locations and scavenging opportunities. Chimps have detailed knowledge of the spatial distribution of resources and the ripening cycles of many plants; however, they don’t exhibit a “creative or insightful use of that knowledge.” Their natural history intelligence appears to flow out of “rote memory.” One can say it is instinctive, likely rooted in their all-purpose general intelligence. (This concept of natural history intelligence has traits similar to Gardner’s spatial intelligence insomuch as it is about geographical-spatial comprehension of resource locations. It is also close to another, eighth intelligence, Gardner added later, “naturalistic intelligence,” which environmentalists, and even Gardner, interpreted in a progressive way.)

Mithen does not think that tool use by chimpanzees indicates their minds had started to evolve a specialized module of technical intelligence. Their tool use simply relied on trial and error, rooted in their all-purpose general intelligence. Technical intelligence is exhibited in the ability to manipulate and transform natural objects into manufactured tools, showing understanding of the physical properties of objects. This intelligence is akin to Gardner’s logical-mathematical intelligence, but rather as it manifested itself, I would say, in the less intellectually developed, but basic, survival reality of hunting-and-gathering peoples.

Mithen also does not buy the argument that chimpanzees had a linguistic capacity. A specialized module for technical intelligence really makes its appearance during the Homo lineage, when actual stone tools are constructed. What may seem like a simple chopper requires recognition of “acute angles on the nodules, to select so-called striking platforms and to employ good-hand-eye co-ordination to strike the nodule in the correct place, in the right direction, and with the appropriate force” (96). During this evolutionary lineage, the natural history intelligence grew in sophistication, and so did the social intelligence. Mithen says this intelligence was likely selected as the number of social relationships within and between groups grew, requiring more thinking about alliances. He agrees with research suggesting that a specialized intelligence for language likely evolved as a means for exchanging social information “within large and socially complex groups, initially as a supplement for grooming, and then as a replacement for it” (111). He thinks it was only with the appearance of archaic H. sapiens and the Neanderthals, between 400,000 and 100,000 years ago, that the expansion in brain size reflected the evolution of “a form of language with an extensive lexicon and a complex series of grammatical rules” (144).

Once we get to the Neanderthals, we have a mind with highly developed, specialized modules for all the intelligences, including a brain of the same size as that of contemporary humans. But there is a dilemma: Neanderthals remained extremely conservative in their technology. Why did they ignore bone, antler, and ivory as raw materials? Why did they not make tools designed for specific purposes, and multicomponent tools? Why did they fail to develop any art? Why did they lack any beliefs in supernatural beings? Mithen’s answer is very insightful. There was no “cognitive fluidity” between social, technical, linguistic, and natural history intelligences. He uses the metaphorical illustration of a cathedral with four chapels of specialized intelligences, with a central part, or “nave,” of the building representing general intelligence. The weakness in the mind of Neanderthals was that the chapels were closed to each other; there were no doors or passages of communication, and the walls of each chapel (except for the door between language and social intelligence) were thick and impenetrable to the insights of the other chapels. Only during the Upper Paleolithic era did the intelligences begin to function seamlessly together. Only then did the mind acquire a capacity for analogical and metaphorical thinking. Only then do we witness the emergence of a central processing system engaged (to use the words of Fodor) in “the transfer of information among cognitive domains” previously operating independently of each other. Thinking was no longer “encapsulated” (The Modularity of Mind, 107).

Neanderthals could not make tools from bone, antler, or ivory because these materials came from animals which had always been thought about within the domain of natural history intelligence, whereas the stone physical objects that were transformed into handaxes had always been thought about within the domain of technical intelligence. Only when these two domains began to communicate with each other were humans able to conceptualize the idea that animal materials could be subjected to cognitive processes previously limited to the technical domain. Likewise, making specific tools for specific tasks – say, projectiles to kill deer – required not only technical intelligence, but also natural history thinking about the deer’s anatomy, migratory movements, and hide thickness.

The reason Neanderthals did not make artifacts used for body decoration, such as beads, pendants, and perforated animal teeth, is that there was no cognitive fluidity between the social and the technical/natural history modules. H. erectus, Neanderthals, and other Early Humans, in Mithen’s estimation, were as socially intelligent and as Machiavellian in their tactics to gain social advantage as Modern Humans. Making these objects required open doors between technical and natural history intelligence, and between these two chapels and social intelligence, since body decoration is all about communicating social status and group affiliation. Similarly, Mithen explains how anthropomorphism in art, and the totemic belief that humans have a mystical relationship with an animal, require fluidity between thinking about animals (natural history intelligence) as people (social intelligence), and thinking about people as animals. Art, that is the creation of artifacts/images with symbolic meanings as a means of social communication, requires the integration of the natural history idea of hoofprints as a referent of a particular animal with the idea of intentional social communication and the idea of technically producing artifacts from mental templates.

What about the integration of linguistic intelligence? While Mithen is not as explicit as he should be, this intelligence evolved as a specialized chapel, but from the beginning the doors of this chapel were open to the social intelligence chapel, since it evolved directly out of the domain of social intelligence. Strictly speaking, linguistic ability came late in evolution; H. erectus only produced “a wide range of sounds in the context of social interaction” to communicate feelings of anger or desire. Only with Modern Humans can one say with certainty that language with grammatical rules proper began. It is not that linguistic intelligence cannot be differentiated from social intelligence; it is that this intelligence evolved within the context of social interactions. Mithen accepts the theory that language evolved as the size of hominid groups increased and as social interactions became more complicated. Individuals with better communication skills were selected for their advantage in developing better sounds of communication; that is, better ways to acquire social knowledge about other individuals and about allies and enemies in the pursuit of sexual favors and status. Mithen’s metaphorical illustration of the mind as a cathedral (67) should have kept the doors between social and linguistic intelligence open from the beginning.

There is something else that stands out about the chapel of social intelligence: it was the domain responsible for the development of consciousness, reasoning, and reflection about one’s cognitive processes and psychological states. Mithen follows Nicholas Humphrey’s argument that “consciousness evolved as a cognitive trick to allow an individual to predict the social behaviour of other members of his or her group” (147). He writes:

At some stage in our evolutionary past we became able to interrogate our own thoughts and feelings, asking ourselves how we would behave in some imagined situation. In other words, consciousness evolved as part of social intelligence (147).

Mithen extends this idea from Humphrey to argue that in the degree to which the social intelligence module in the Neanderthal mind was closed off from toolmaking and the thinking associated with the natural world, “Neanderthals had no conscious awareness of the cognitive processes they used in the domains of technical and natural history intelligence” (147). But how could Neanderthals have gone about making handaxes, foraging plants, and hunting animals without being conscious? It was Jaynes who first argued that humans can perform all sorts of rationally-ordered activities while being unconscious, and who made a distinction between reasoning or thinking and consciousness. Mithen provides examples of “unconscious thought” similar to ones provided by Jaynes, though there are no indications he has read Jaynes. However, Mithen illuminates well Jaynes’ line of reasoning, showing how “many complex cognitive processes go on in our minds about which we have no awareness . . . For example, we have no conscious awareness of those processes we use to comprehend and generate linguistic utterances.” The Swiss-army-knife-like mind of Neanderthals went about making handaxes, foraging, and hunting without conscious awareness of the cognitive process they were employing. “There was no introspection” in the domains of technical and natural history intelligence. This explains “the monotony of industrial traditions, the absence of tools made from bone and ivory, the absence of art” (150). But once the chapels of technical and natural history intelligence were opened to the chapel of social intelligence with its consciousness, there was a “cultural explosion,” a “frenzy of activity, with more innovation than in the previous 6 million years of human evolution” (152).

“Cognitive fluidity is characteristic of the modern mind – a capacity to integrate ways of thinking and stores of knowledge to generate creative ideas and which underlies the pervasive use of metaphor and analogy in human thought” (Mithen 1996).

Cognitive Fluidity in our Bicameral Cultural Marxist Kingdom

How could Jaynes have said that the minds of such advanced peoples as the Mesopotamians and Egyptians were lacking in consciousness, populated by individuals without “an interior self”? Jaynes was looking at a different period in history, the ancient world of the first civilizations, and in this world he saw powerful divine authorities and powerful gods directing and controlling the thought-processes of the population, existing as if they were voices inside their heads. The inhabitants of these civilizations had a “bicameral mind” because the cognitive functions were divided between the part of the brain that was controlled by external mandates from divine authorities, which appeared to be “speaking” inside their heads, and the part of the subdued self, which listened and obeyed without thinking about the commands and without questioning the voices inside his head. It is worth thinking about Jaynes’ claim that in this bicameral era, “there were no private ambitions, no private grudges, no private frustrations, no private anything, since bicameral men had no internal ‘space’ in which to be private, and no analog ‘I’ to be private with. All initiative was in the voice of the gods” (205).

I already questioned the reasons Jaynes offers for the breakdown of the bicameral mind in a forthcoming Introduction for a translation of the Iliad. As much credit as Jaynes deserves for realizing that consciousness is not something produced ready-made through natural selection, but is a product of history, he took it for granted that once the bicameral mind broke down, the conscious mind emerged in its complete form. He was not a historian, but a psychologist, and thus not well aware of the amazingly different history of Western individualism and cultural creativity. Mithen is an archaeologist who thinks that the attainment of cognitive fluidity by H. sapiens sapiens some 60,000 years ago was a one-time phenomenon; the doors of the chapels opened in one full swing, we are made to think, and once they did, humans became fully conscious.

But one has to ask – and the more so in light of the far greater cultural explosion we would witness in ancient Greek times, and thereafter continuously among Europeans – if Mithen was willing to conceptualize the possibility that since there are different “orders of consciousness” between chimpanzees, Neanderthals, and H. sapiens sapiens, why not between men in vastly different historical periods and cultural settings? Even if we were to start with the self as it manifested itself in archaic Greece, we would still not have a fully developed self. Mithen is too absolutist in thinking that cognitive fluidity emerged in full bloom during the Upper Paleolithic; Jaynes is too absolutist in thinking the bicameral mind broke down during the first millennium BC, and so is Snell (and Zeruneith) in thinking that consciousness was completely “liberated” by the time we get to Socrates and Plato. Subjective consciousness, and degree of fluidity between the intelligences, develop very gradually, in a variety of ways, depending on the cognitive process one is considering.

Mithen thinks the Upper Paleolithic “cultural explosion” was the major period of creativity in human history. He ends with an epilogue minimizing the significance of the origins of agriculture. He ignores the entire history of innovations thereafter, but takes it as a given that once cognitive fluidity was evident 60,000 years ago, the age of computers would be engendered by humans with equal cognitive fluidity across the world. His view of humans and history is framed by his evolutionary psychological view that the mind is a product of biological evolution, and that the cognitive modules and the evolution of cognitive fluidity became hard-wired into the mind and universal among all the peoples of the planet 60,000 to 30,000 years ago. Much like every other archaeologist of human evolution, he takes it as a given that the fundamental changes in the temporal existence of humans happened during their evolutionary history up until the Upper Paleolithic. (I should say parenthetically that Mithen accepts the mandated view of Stephen Jay Gould and Richard Lewontin that biological change somehow ceased after this point in time.)

He also evinces an inclination to view hunting-and-gathering cultures as benevolent, as a time in which humans were somehow living communally without malice, simply surviving and evolving; occasionally fighting over status, but otherwise, pretty loving creatures. He even suggests that these cultures, and “traditional, non-Western cultures,” achieved a higher level of cognitive fluidity than the specialized cultures of the contemporary West. I am not exaggerating. He surmises a higher degree of fluidity from the fact that hunter-gatherers thought “of their natural world as if it were a social being,” and did not view the natural and the human as separate, rather viewing all the domains of life, persons, society, and environment as one world. Seeing the world as consisting of two separate entities, the material and the spiritual, is an indication that one is using a different blade, or cognitive module, for each realm of reality. He writes:

The overwhelming impression from the descriptions of modern hunter-gatherers is that all domains of their lives are so intimately connected that the notion that they think about these with separate reasoning devices seems implausible (49).

In a footnote, he then refers approvingly to Ernest Gellner’s view that this unified vision of hunter-gatherers, and of non-Western traditional cultures, “reflect a complex and sophisticated cognition which serves to accomplish many ends at once” (233). He then concludes that the separation of nature, society, and technology “is a product of Western thought. Modern hunter-gatherers make no such distinctions and exhibit unrestrained cognitive fluidity” (233).

This amazingly confounding statement can make sense only in light of the obligation academics in the West have to say very positive things about non-Western and primitive minds. How can any straight-thinking person possibly suggest that hunter-gatherers, and non-Western peoples, have more cognitive fluidity simply because of their undifferentiated view of the world? It seems logical to me that increasing cognitive fluidity does not preclude further specialization of the intelligences, but actually encourages it. The history of knowledge, which is almost entirely a history of European knowledge, given that Europeans are responsible for the development of all the specialized disciplines taught in our universities, is proof of this. Metaphorical and analogical connections become the norm in European thinking at the same time that Europeans start to make clear distinctions between different orders of reality and to generate particular disciplines for the study of each part of reality, starting with the ancient Greeks. Differentiation is central to the incredible accomplishments of the West in multiple domains, and there can be no modernity without differentiation of functions, laws, offices, disciplines, and so on. This differentiation was made possible by the greater cognitive fluidity of the Western mind, for knowing that there are separate faculties and separate worlds requires a higher level of awareness within each of the domains of intelligence, of one’s own reasoning within that domain, and of what particular methodologies are best to employ in one domain as contrasted to another domain.

This is not an understandable mistake on Mithen’s part. It is a result of the reality that academics today conduct their research within a tight ideological grid that prohibits dissent against the program of diversity. The pervasiveness of this ideology is evident in the fact that all Western universities have made it their “mission” to promote mass immigration, diversification of the curriculum, hiring of foreigners who are not white, and in the tendency of natural scientists to give implicit support to this ideology even in their otherwise scientific studies. Mithen thus provides an inbox with the heading, “Racist attitudes as a product of cognitive fluidity.” It is a simple-minded section that is out of place in an otherwise excellent book. The idea is that integrating technical intelligence (which involves concepts about manipulating objects of nature) with social intelligence (which involves thinking about human relations) may result in thinking of people as objects to be manipulated, which may result in treating some people as “less than human” rather than as human beings with inherent rights.

But did not Mithen tell us that “manipulation” of others in the pursuit of one’s interests is a component of social intelligence? Notice how he suddenly redefines social intelligence to mean treating others as ends rather than as means, projecting a modern Western concept of human rights onto the meaning of social intelligence. I will say a few things below about this redefinition of social intelligence to mean empathy for “strangers.”

It is also the case that Mithen feels compelled to downgrade the meaning of science in order to compel white students to believe that science has always been a common endeavor across the world ever since cognitive fluidity emerged in Upper Paleolithic times. He wants students to believe that science is merely about “the development and use of tools to solve specific problems” (214). Hunter-gatherers did not make telescopes and microscopes, but they did make tools which integrated natural history knowledge and technical knowledge. Mithen should know that the reason he opted for the concept “technical intelligence” rather than the concept Gardner uses, “logical-mathematical intelligence,” is that the latter applies to the modern world, whereas the former term reflects better the simpler scientific mind of people living by hunting and gathering. Perhaps wondering why these primitives did not actually develop any of the sciences we teach in our universities, he settles for the claim that “the potential to develop a scientific technology emerged with cognitive fluidity” (214). But the scientific issue is whether this potential guaranteed the emergence of scientific technology across the world, and whether all peoples developed the same degree of cognitive fluidity and the same level of specialized intelligences.

He would have us believe that the moment cognitive fluidity emerged in prehistorical times, “analogy and metaphor [came to] pervade every aspect of our thought and [to] lie at the heart of art, religion and science” (215). He refers to Thomas Kuhn’s claim that “the role of metaphor in science goes far beyond that of a device for teaching and lies at the heart of how theories about the world are formulated,” but he forgets to tell readers that all the theories and metaphors Kuhn wrote about were developed only by Europeans. In a footnote, he then criticizes the claim that science is primarily a product of the West, endorsing the multicultural view that science was just as developed in traditional non-Western societies, approvingly citing someone claiming that “science is a genuine universal, characteristic of all advanced life-forms” (261). So even animals who happen to be “advanced” are scientists; this is how far academics are willing to go in their effort to downgrade their own Western cultures so as to encourage white students to welcome their replacement by immigrants. The statistics, however, are quite definitive: Europeans account for ninety-seven percent of scientific accomplishment in history.

This inability to evaluate in an honest and straightforward way the emergence of a true sense of self since ancient Greek times, and the subsequent development of selfhood thereafter, which led to the capacity for making clear distinctions between the domains of intelligence and the respective methodologies required for each domain, can be explained as a product of the increasing subjection of Western academics to the bicameral kingdom of Cultural Marxism. Academics today are the slaves of voices that command them to “fight racism,” and to repeat forever in their minds the commandment that “diversity is our strength,” that “Western ethnocentrism is morally pernicious,” that “all cultures are equal,” and that “race is a construct.” It is not that they are lacking in subjective consciousness similarly to the men living in ancient theocratic societies. They are conscious in many areas of life, except in the areas of race, white identity, and Western uniqueness. When it comes to these subjects, they cease to have “a self that is responsible and can debate within itself” (Jaynes, 79). They become subservient, in a blindly habitual way, to the religiously unquestioned mandates of diversity. The threatening voices of diversity instill fear of ostracism, loss of status, the cutting back of grants, and job loss.

Something else is going on. The social intelligence of whites, the prisoners of this bicameral kingdom, has become soft like a child’s teddy bear, and empathetic. Today, many can’t take seriously the idea of social intelligence because of the way it has come to be redefined as the emotional ability to sympathize with others – to put ourselves in someone else’s place and try to empathize with their feelings. Daniel Goleman, in his New York Times bestseller, Social Intelligence: The New Science of Human Relationships (2006), told millions of impressionable Europeans that they should suppress the “dark side” of their social intelligence, the Machiavellian “psychopathology” of calculating and promoting their in-group interests, in the name of a more “civilized” and “altruistic” intelligence that would make them empathetic towards immigrant outgroups. But social intelligence, as Mithen well explains, is about “extensive social knowledge about other individuals, in terms of knowing who allies and friends are, and the ability to infer the mental states of those individuals” (82-3). It has to do with using our own minds and behavioral reactions as a model to read the minds and predict the behaviors of others. It is an intelligence that evolved in the struggle for survival within and between groups; it is about ambition, manipulation, sexual privileges, power, and about knowing who your friends really are.

Thinking that others think and behave in the same way as we do has been indispensable to our evolutionary enhancement. If other humans thought in a manner that was very different from ourselves, we would not be able to develop this social intelligence. It is very difficult for us to think that other people do not have the same mind as ours. But this is precisely the problem Europeans find themselves in today. Through their much higher cognitive fluidity and intense sense of introspection and critical consciousness, they have created a society in which tribal identities are weaker and discredited, for the sake of a hyper-individualism in which everyone is to be judged in abstraction from their group identities. This “libertarian” culture worked as long as Europeans were the only players, but now millions of immigrants from non-European cultures, together with hostile elites in charge of the media, who do not think in this individualistic way, have weaponized this moral universalism to obligate Europeans to treat outsiders as equal individuals, cunningly employing their social intelligence to promote their own tribal interests. It is not that the Darwinian component of social intelligence has disappeared altogether among Europeans. There is reason to hope that populist nationalism and white identity politics will continue to grow, and then Europeans will be able to enjoy their higher cognitive fluidity within their own independent homelands.

This article was reproduced from the Council of European Canadians Website.

8 comments

“Only with the Indo-Europeans, and then at a very rapid pace with the ancient Greeks, did humans begin to overcome the dark world of mysterious forces and unquestioned norms enveloping their subjective consciousness.”

I think the general argument is correct that this is a uniquely IE development, but I think it occurred earlier, because we can see that other groups like the Celts and Germanics had a similar epic poetry tradition. The only difference is that we have it preserved from an earlier date in Greece since it was written down so much earlier. But it’s quite clear that all IE cultures had a similar tradition of aristocratic heroism and conscious self.

I agree, and write about this in Uniqueness, the similar aristocratic poetic tradition of the Celts and Germans. This poetic tradition is already evident in the prehistoric Indo-Europeans before the Celts and Germanic peoples are differentiated.

The self – what it is, how it generates the thinking mind, how it relates to what we perceive as reality – is of course the central theme of the major Oriental religions. The lucidity and elegance of the core theory is far beyond anything that Western man has produced in the last 2,500 years.

I have yet to find in ancient, medieval, and modern Eastern philosophy a concept of self that is not commingled with other concepts or spheres of reality. I examined the Chinese lack of such a concept in two prior articles posted here. If you are thinking of Indian philosophy, the Upanishadic concept of “ātman” combines self, breath, spirit, and body, in a way that may be compared to the lack of distinction between breathing, soul, emotions, and mind in the Iliad. The concept of ātman refers interchangeably to self, life-force, consciousness, and ultimate reality.

If the self is in fact commingled with other spheres of reality, then you’ve just made falsity the main criterion on which you judge the value of a school of thought.

“Academics today are the slaves of voices that command them to “fight racism,” and to repeat forever in their minds the commandment that “diversity is our strength,” that “Western ethnocentrism is morally pernicious,” that “all cultures are equal,” and that “race is a construct.” It is not that they are lacking in subjective consciousness similarly to the men living in ancient theocratic societies. They are conscious in many areas of life, except in the areas of race, white identity, and Western uniqueness. When it comes to these subjects, they cease to have “a self that is responsible and can debate within itself” (Jaynes, 79). They become subservient, in a blindly habitual way, to the religiously unquestioned mandates of diversity. The threatening voices of diversity instill fear of ostracism, loss of status, the cutting back of grants, and job loss.”

Max Stirner would call these “spooks,” as in having “bats in your belfry.”

The essence of social intelligence would appear to be what Carl Schmitt called “the political”, which is “that which distinguishes between friend and enemy”, but not just for oneself, but for all persons affecting the individual in question.

I wonder if it might be useful to distinguish between “functional empathy” and “pathological empathy.” The former, which is something that successful military commanders often display, is the ability to look at the situation from the point of view of another, whether he be superior, subordinate, ally, or enemy. The latter is the embrace of the interests of another group to the detriment of one’s own.
